Value Stream Measurement

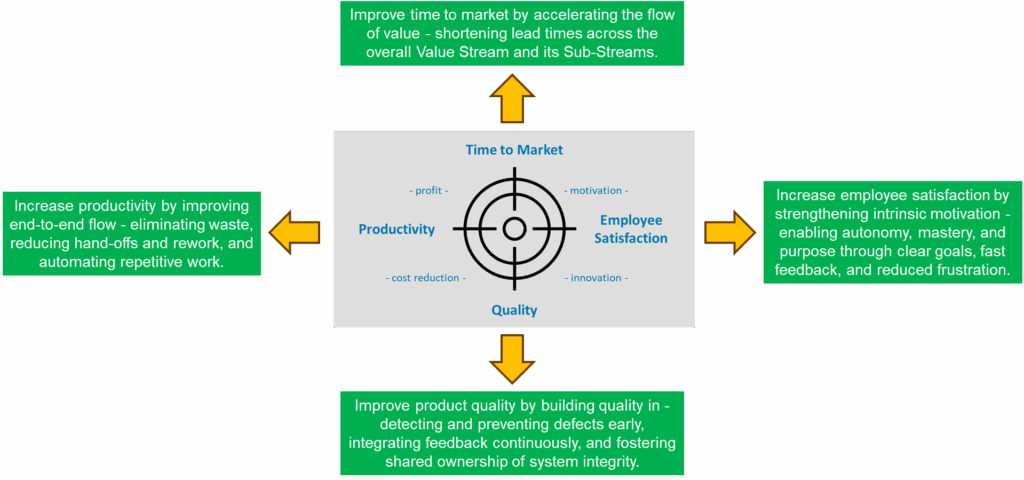

This article introduces the third stage of the Value Stream Lifecycle – Value Stream Optimization – focusing on how to design and operate a measurement system. It explains how OKRs, KPIs, Metrics, and Measures connect strategic goals with operational improvement, using Time to Market as a practical example. Readers learn how to turn measurement into a driver of learning, improvement, and sustainable performance.

“Without data, you’re just another person with an opinion.”

– Attributed to W. Edwards Deming [1]

Stage Three of the Value Stream Lifecycle

Value Stream Optimization marks the third stage in our value stream lifecycle. After identifying and understanding the value stream, we reorganized it around value to build a solid foundation for performance. The next step is to systematically improve that performance and unlock the value stream’s full potential. To do this effectively, we first need to define what “optimized” actually means — by establishing a clear measurement system. Such a system provides the data to determine whether improvement is really happening and to identify exactly where in the value stream further optimization is needed. With this insight, we can analyze, learn, and continuously enhance the flow of value.

Measuring the Performance of a Value Stream

A value stream operates with a set of parameters that indicate how well it performs. To understand and improve its performance, these parameters must be measured. Simple measurements can provide an initial snapshot of the current state, but systematic optimization requires tracking performance over time to see whether – and how quickly – real improvement occurs. For this to be effective, the data itself must be reliable and relevant. We need sufficient data quality, the ability to measure what truly matters, and the confidence and support of stakeholders who trust the results. Only then can the measurement system deliver the insights required for meaningful improvement.

Establishing the Measurement System

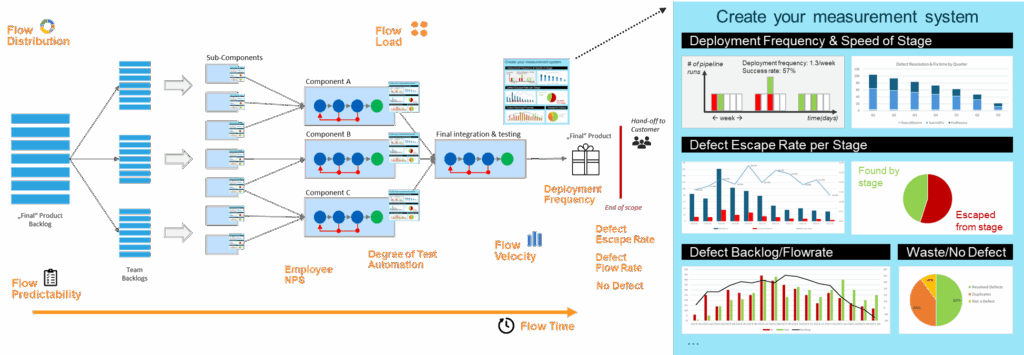

An effective measurement system must deliver insights into how performance evolves over time and provide dashboards and analysis tools that enable teams to explore, validate, and interpret the underlying data. The picture below shows examples of measures used within a value stream.

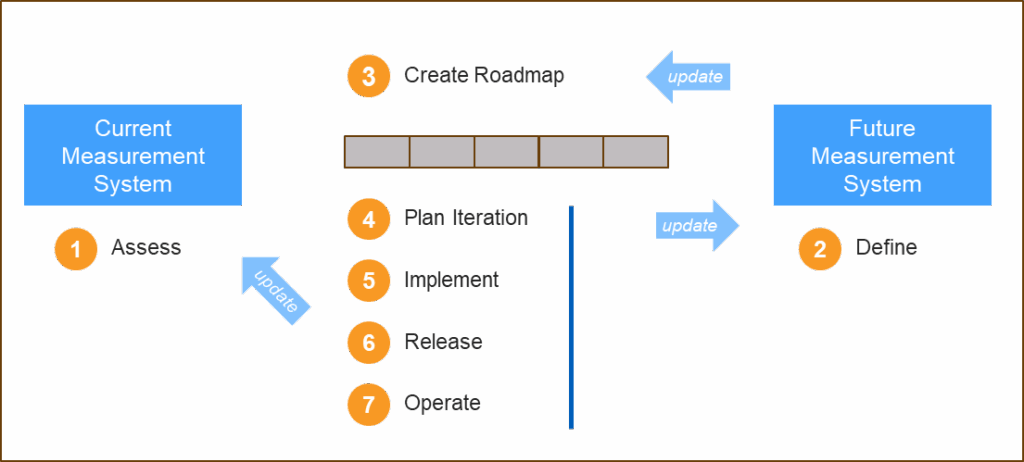

Building a measurement system is much like developing a product – in this case, the product is the measurement system itself. We (1) assess the current system to understand what already exists, then (2) define the desired future system. This future system must clearly specify what to measure and include the necessary tools and infrastructure to collect, calculate, and visualize the resulting insights. Based on this, we (3) create a roadmap and (4) plan the first iteration to move from the current state toward the future state. Next, we (5) implement, (6) release, and (7) operate the updated system – continuously collecting feedback and insights to refine the roadmap and plan subsequent iterations.

Step 1: Current State Assessment

When defining the measurement system, all performance parameters should be listed and evaluated for their suitability. This evaluation considers three key aspects:

Current Measures: What indicators already exist, and how well do they reflect performance of the value stream?

Supporting Questions:

- What measures do we have?

- How well do they give us the required insights?

- How could they be refined, replaced, or complemented to provide better insights?

- What other measures should we have?

Measurability: How feasible, accurate, and consistent are the measurements?

Supporting Questions:

- Definition: Do we have a clear and consistent definition of how each metric is measured?

- Data Availability: Do we have the required data, and do we have access to the necessary data sources?

- Data Quality: Is the data quality sufficient to support reliable insights?

- Effort and Cost: What are the costs and efforts associated with measuring?

- Timeliness: How frequently can we update the data to enable timely decisions?

- Ownership: Who is responsible for maintaining and validating this metric?

- Automation: Can data collection or visualization be automated to reduce manual effort and error?

- Privacy and Ethics: Are there any compliance or data-protection concerns?

Confidence in Data: How trustworthy and transparent are the results, and how do people perceive them?

Supporting Questions:

- Trust and Credibility: Is the data trustworthy – do people affected by it believe it accurately reflects reality?

- Transparency and Traceability: Can people understand and validate how the metric is calculated and what data was used? Is the process transparent and reproducible?

- Behavioral Impact: How might this measurement influence behavior? Could it lead to gaming, superficial improvements (“greenifying”), or non–value-adding activities?

Step 2: Future State Definition

After agreeing on and documenting the current state, the future state is defined. This can be achieved by implementing the agreed improvements directly or, more systematically, by defining a hierarchy of OKRs, KPIs, Metrics, and Measures.

By defining Objectives and Key Results (OKRs) [2], we connect the strategic goals of the value stream [3] with concrete improvement efforts, ensuring that objectives, funding, and measurable outcomes support one another. OKRs define what we want to achieve and how we will measure success. They are goal-oriented, time-bound, and often ambitious, driving change and improvement.

Example for Time to Market, one of the performance parameters:

Objective: Improve development flow to enable faster and more predictable delivery of vehicle functions.

The Objective focuses on improving development flow to enable faster and more predictable delivery of vehicle functions. Combining flow and predictability ensures that the value stream becomes not only faster but also more stable and reliable – speed without consistency would only shift problems downstream. The three Key Results complement one another by providing a comprehensive view of flow performance across the development value stream. Average Flow Time captures the speed of delivery – how quickly work items move from start to integration readiness. Delivery Predictability adds the dimension of stability, showing how consistently teams deliver within their planned timeframes. Integration Readiness Adherence ensures coordination and system maturity by tracking whether components are delivered on time and can be smoothly integrated. Viewed together, these Key Results balance speed, reliability, and synchronization, ensuring that improvements in one area do not come at the expense of another. This integrated perspective enables the organization to achieve its objective of faster and more predictable delivery of vehicle functions.

Key Performance Indicators (KPIs) track how the system performs on an ongoing basis. They are used to monitor stability and health rather than to set new goals.

KPI 1: Average Flow Time for Features: Calendar days between work start and integration readiness.

These KPIs directly support the defined OKRs by translating the strategic goal of improving development flow into measurable operational outcomes. Flow Time per Feature captures the speed of delivery, showing how long it takes for a feature to move from start to integration readiness and thus directly supports the reduction of average flow time. On-Time Delivery Ratio measures the stability and reliability of the process by indicating how consistently teams deliver within planned timeframes, aligning with the goal of improving delivery predictability. Integration Frequency reflects the cadence and coordination of system integration, showing how often components are ready and successfully integrated, thereby supporting the objective of increasing integration readiness. Together, these KPIs provide a balanced view of speed, predictability, and synchronization – three essential dimensions for achieving faster and more predictable delivery of vehicle functions.
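As a minimal sketch, the first two KPIs could be computed from feature records as follows. The `Feature` fields, the sample dates, and the on-time rule (integration-ready on or before the planned date) are assumptions for illustration, not definitions taken from a specific tool.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean


@dataclass(frozen=True)
class Feature:
    feature_id: str
    work_start: date
    planned_ready: date      # assumed field: planned integration-readiness date
    integration_ready: date  # actual integration-readiness date


def flow_time_days(feature: Feature) -> int:
    """Calendar days between work start and integration readiness."""
    return (feature.integration_ready - feature.work_start).days


def average_flow_time(features: list[Feature]) -> float:
    """KPI: Average Flow Time for Features, in calendar days."""
    return mean(flow_time_days(f) for f in features)


def on_time_delivery_ratio(features: list[Feature]) -> float:
    """Share of features that were integration-ready by their planned date (assumed rule)."""
    on_time = sum(1 for f in features if f.integration_ready <= f.planned_ready)
    return on_time / len(features)


# Hypothetical sample data
features = [
    Feature("F-1", date(2025, 1, 6), date(2025, 2, 3), date(2025, 1, 31)),
    Feature("F-2", date(2025, 1, 13), date(2025, 2, 10), date(2025, 2, 24)),
    Feature("F-3", date(2025, 2, 3), date(2025, 3, 3), date(2025, 3, 3)),
]

print(f"Average flow time: {average_flow_time(features):.1f} days")
print(f"On-time delivery ratio: {on_time_delivery_ratio(features):.0%}")
```

Keeping each KPI as a small, named function makes its definition explicit and easy to review with the people who are measured by it.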

Metrics are process-level indicators that reveal the underlying dynamics of the value stream. They describe how work flows through the system, where delays occur, and how consistently teams deliver. By analyzing metrics, we can identify bottlenecks, waiting times, and inefficiencies that influence the KPIs and, ultimately, the OKRs.

Metric 1: Feature Cycle Time: The elapsed time it takes to complete a specific work item within a defined process scope (e.g., design–build–test).

Feature Cycle Time measures the elapsed time required to complete a specific work item within a defined process scope, such as design–build–test, and helps identify where time is lost within the flow. Flow Time Variability captures the statistical variation of Flow Time across features, indicating how consistently the value stream delivers and highlighting instability or uneven workloads when variability is high. Integration Success Rate per Time Period measures the proportion of successful integration attempts within a defined timeframe, combining cadence and reliability to show how often and how effectively components are integrated into the next stage. Together, these metrics provide the operational insight needed to improve flow speed, stability, and predictability across the value stream.
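Two of these metrics can be sketched in a few lines. The example below assumes that variability is expressed as the population standard deviation and the coefficient of variation, and that the "time period" is a calendar month; both are illustrative choices, and the sample values are made up.

```python
from collections import defaultdict
from datetime import date
from statistics import mean, pstdev


def flow_time_variability(flow_times_days: list[float]) -> dict[str, float]:
    """Spread of flow times: standard deviation and coefficient of variation."""
    avg = mean(flow_times_days)
    std = pstdev(flow_times_days)
    return {"mean": avg, "std_dev": std, "cv": std / avg}


def integration_success_rate_by_month(
    attempts: list[tuple[date, bool]],
) -> dict[str, float]:
    """Proportion of successful integration attempts per calendar month."""
    totals: dict[str, int] = defaultdict(int)
    successes: dict[str, int] = defaultdict(int)
    for day, succeeded in attempts:
        period = day.strftime("%Y-%m")
        totals[period] += 1
        if succeeded:
            successes[period] += 1
    return {period: successes[period] / totals[period] for period in sorted(totals)}


# Hypothetical sample data
variability = flow_time_variability([20, 30, 40])
rates = integration_success_rate_by_month(
    [
        (date(2025, 1, 10), True),
        (date(2025, 1, 20), False),
        (date(2025, 2, 5), True),
        (date(2025, 2, 19), True),
    ]
)
print(variability)
print(rates)
```

The coefficient of variation is useful when comparing value streams with different average flow times, because it expresses the spread relative to the mean.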

Measures are the foundation of the measurement system. They represent the raw data points collected from tools and processes – the factual evidence of how work progresses through the value stream. Each measure is objective and precise, such as timestamps, counts, or success rates, and serves as input for calculating metrics. While metrics describe how the system performs, measures provide the data that makes this analysis possible.

Measure 1: ID of each feature, with start and end dates for implementation per process scope.

The list of measures shown here is not exhaustive – additional data points may be required depending on the context, available systems, or specific questions being analyzed. Measures can also include attributes that allow slicing and dicing of the data, such as feature type, integration level, or component category, enabling deeper insight into patterns, trends, and improvement opportunities across the value stream.
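To illustrate how raw measures with attributes allow slicing, the following sketch stores one record per feature and process scope, then averages cycle time by any attribute. The record layout, the attribute values ("software", "hardware"), and the dates are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from statistics import mean


@dataclass(frozen=True)
class ScopeRecord:
    feature_id: str
    process_scope: str  # e.g. "design", "build", "test"
    start: date
    end: date
    feature_type: str   # slicing attribute (assumed values)


def cycle_time_days(record: ScopeRecord) -> int:
    """Elapsed calendar days for one feature within one process scope."""
    return (record.end - record.start).days


def average_cycle_time_by(records: list[ScopeRecord], attribute: str) -> dict[str, float]:
    """Group records by the given attribute and average their cycle times."""
    groups: dict[str, list[int]] = defaultdict(list)
    for record in records:
        groups[getattr(record, attribute)].append(cycle_time_days(record))
    return {key: mean(values) for key, values in groups.items()}


# Hypothetical sample data
records = [
    ScopeRecord("F-1", "design", date(2025, 1, 6), date(2025, 1, 10), "software"),
    ScopeRecord("F-1", "build", date(2025, 1, 13), date(2025, 1, 24), "software"),
    ScopeRecord("F-2", "design", date(2025, 1, 6), date(2025, 1, 14), "hardware"),
    ScopeRecord("F-2", "build", date(2025, 1, 15), date(2025, 2, 4), "hardware"),
]

by_scope = average_cycle_time_by(records, "process_scope")
by_type = average_cycle_time_by(records, "feature_type")
print(by_scope)
print(by_type)
```

The same raw records answer two different questions here: where in the process time is spent, and which kind of feature takes longer.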

Defining the future state is always context-specific, as every value stream operates under different goals and conditions. The example shown here provides a starting point for your own discussions and design. It illustrates the key elements and relationships that a well-structured measurement system should include, helping you understand how such a definition can look in practice.
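One way to keep these relationships explicit is to store the hierarchy as a small tree and trace any element back to the objective it supports. The sketch below uses names from the Time to Market example; the `Node` structure and the specific parent-child links are an illustrative assumption, not a prescribed model.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    level: str  # "Objective", "Key Result", "KPI", "Metric", or "Measure"
    name: str
    children: list["Node"] = field(default_factory=list)


def trace(node: Node, target: str, path: tuple = ()):
    """Return the chain from the root down to `target`, or None if not found."""
    path = path + (f"{node.level}: {node.name}",)
    if node.name == target:
        return list(path)
    for child in node.children:
        found = trace(child, target, path)
        if found:
            return found
    return None


# Assumed linkage for illustration
measure = Node("Measure", "Feature start and end dates per process scope")
metric = Node("Metric", "Feature Cycle Time", [measure])
kpi = Node("KPI", "Average Flow Time for Features", [metric])
key_result = Node("Key Result", "Average Flow Time", [kpi])
objective = Node(
    "Objective",
    "Improve development flow to enable faster and more predictable delivery",
    [key_result],
)

for line in trace(objective, "Feature Cycle Time"):
    print(line)
```

A lookup like this helps answer the question "why do we collect this data?" for every measure in the system.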

Step 3: Create Roadmap

Once all OKRs, KPIs, metrics, and measures are defined, the next step is to plan how to move from the current state to the desired future state. Depending on the gap between the two, this may require several iterations and even a dedicated roadmap.

Experience shows that these steps are rarely linear – measurement capability and data quality often need time to mature. In the meantime, proxy measures or temporary compromises may be necessary.

The implementation of a measurement system typically demands continuous feedback from its users and multiple refinement cycles before the data becomes truly meaningful. These feedback loops are both expected and essential – managing expectations throughout this process is key to success. Finally, remember that inaccurate or misleading data can undermine trust and jeopardize the entire measurement initiative, so handle and communicate data with great care.

Step 4: Plan Iteration

This step follows the same principles as any product development project: define a backlog and let the team plan the iteration.

Step 5: Implement

This is where theory meets reality. What data is actually available and accessible? Is its quality sufficient for meaningful analysis? As soon as you begin working hands-on with your tools and systems, you’ll likely uncover the need for adjustments: adapting data types, converting free-text fields into selection lists, refining status models, emphasizing to teams the importance of accurate updates, making key fields mandatory, cleaning and normalizing existing data, or even consolidating a heterogeneous tool landscape. Each of these insights will influence not only what you want to measure, but also what is possible to measure.
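A simple data audit can make such gaps visible early. The sketch below checks mandatory fields and maps free-text status values onto a fixed selection list; the field names, status model, and aliases are assumptions and would need to match your own tools.

```python
ALLOWED_STATUSES = {"open", "in progress", "done"}  # assumed status model
STATUS_ALIASES = {  # assumed free-text variants found in existing data
    "wip": "in progress",
    "in-progress": "in progress",
    "closed": "done",
    "finished": "done",
}
MANDATORY_FIELDS = ("feature_id", "start", "status")


def normalize_status(raw: str):
    """Map a free-text status onto the selection list, or return None if unknown."""
    cleaned = raw.strip().lower()
    cleaned = STATUS_ALIASES.get(cleaned, cleaned)
    return cleaned if cleaned in ALLOWED_STATUSES else None


def audit(records: list[dict]) -> list[tuple[int, str]]:
    """Return (record index, problem) pairs for missing fields and unknown statuses."""
    problems = []
    for index, record in enumerate(records):
        for name in MANDATORY_FIELDS:
            if not record.get(name):
                problems.append((index, f"missing {name}"))
        status = record.get("status")
        if status and normalize_status(status) is None:
            problems.append((index, f"unknown status {status!r}"))
    return problems


# Hypothetical sample data
records = [
    {"feature_id": "F-1", "start": "2025-01-06", "status": "WIP "},
    {"feature_id": "F-2", "start": "", "status": "Done"},
    {"feature_id": "F-3", "start": "2025-01-13", "status": "maybe later"},
]

for index, problem in audit(records):
    print(f"record {index}: {problem}")
```

Running such a check regularly shows whether data quality is improving as fields become mandatory and status models are refined.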

The implementation of Metrics and KPIs follows the classical Plan–Do–Check–Act (PDCA) cycle: plan the approach, implement a first version or prototype, validate the results, and apply the learnings to build an improved version — repeating the cycle until the solution is ready for release. While this may sound simple, a successful implementation requires significant expertise. Building such a solution is an active process of data analysis and knowledge discovery about both the process and its underlying data. Ultimately, the speed of feedback loops determines success. Don’t wait for feedback — make it an integral and continuous part of the development process.

The solution is not only about delivering the KPI or metric itself, but also about ensuring transparency and traceability of the results. Users should be able to understand which data was used and how each calculation was performed [4]. Their process knowledge is essential for correctly interpreting the results and validating whether the data truly reflects reality.
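Traceability can be built into the result itself. In this sketch, a computed value carries a plain-language formula and the exact input rows it was derived from, so users can check both; the `TraceableResult` structure and the sample rows are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class TraceableResult:
    name: str
    value: float
    formula: str   # human-readable description of the calculation
    inputs: tuple  # the raw rows the value was computed from


def traceable_average_flow_time(rows: list[tuple[str, date, date]]) -> TraceableResult:
    """rows: (feature_id, work_start, integration_ready) tuples."""
    days = [(ready - start).days for _, start, ready in rows]
    return TraceableResult(
        name="Average Flow Time (days)",
        value=sum(days) / len(days),
        formula="mean(integration_ready - work_start) over the listed features",
        inputs=tuple(rows),
    )


# Hypothetical sample data
result = traceable_average_flow_time(
    [
        ("F-1", date(2025, 1, 6), date(2025, 1, 31)),
        ("F-2", date(2025, 1, 13), date(2025, 2, 24)),
    ]
)
print(result.name, result.value)
print("Formula:", result.formula)
for feature_id, start, ready in result.inputs:
    print(f"  {feature_id}: {start} to {ready}")
```

Exposing the inputs next to the number invites exactly the kind of validation by process experts that the text describes.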

During or after implementation, update your Current State and Future State Assessments as well as the Roadmap to reflect the latest insights and progress.

Step 6: Release

Never release a new metric or KPI before you are confident that people trust the data and understand its meaning. There is no faster way to undermine a measurement initiative than by presenting questionable or unreliable data. Always validate the results with the affected stakeholders and ensure they confirm that the data is accurate and meaningful for interpretation and performance tracking. When extending the scope of a KPI or metric to additional departments or value streams, verify that the underlying data is equally reliable for those contexts. Different teams may follow different processes or use data fields in varying ways. To manage this safely, consider using feature toggles or controlled rollouts to make data visible only to the relevant audience until its quality and interpretation are fully validated.
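A controlled rollout can be as simple as an allow-list per metric. The sketch below shows a metric only to audiences that have already validated the underlying data; the `MetricRelease` structure and the audience names are hypothetical, and a real setup would typically rely on the toggle or permission mechanism of the dashboard tool in use.

```python
from dataclasses import dataclass, field


@dataclass
class MetricRelease:
    metric: str
    validated_for: set = field(default_factory=set)  # audiences that confirmed the data

    def visible_to(self, audience: str) -> bool:
        """A metric is shown only to audiences that have validated it."""
        return audience in self.validated_for


def dashboard_for(audience: str, releases: list[MetricRelease]) -> list[str]:
    """List the metrics that the given audience is allowed to see."""
    return [release.metric for release in releases if release.visible_to(audience)]


# Hypothetical audiences and validation status
releases = [
    MetricRelease("Average Flow Time", {"powertrain", "chassis"}),
    MetricRelease("Integration Success Rate", {"powertrain"}),
]

print(dashboard_for("powertrain", releases))
print(dashboard_for("chassis", releases))
print(dashboard_for("infotainment", releases))
```

Extending a metric to a new department then becomes an explicit step: validate the data with that department first, then add it to the allow-list.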

Step 7: Operate

Operating the measurement system is no different from running any other IT system. Ensure that it is reliable, has proper access controls, and delivers good performance. Make sure users know where to turn when they have questions or encounter issues. Don’t hide behind an anonymous ticket system: know your users, listen to their feedback, and show empathy for their needs. A trusted measurement system is not only technically sound but also supported by a responsive and human-centered operation. It’s not just about showing data, but about providing a tool to analyze it, learn from it, and continuously improve the value stream. [5]

Conclusions

Building and operating a measurement system is an ongoing journey of learning, validation, and improvement. Its true value lies not only in producing KPIs or metrics but in creating shared understanding, transparency, and actionable insight across the value stream. A well-designed system connects strategic intent with operational reality, enabling teams to make data-driven decisions and continuously refine how value flows. As the organization evolves, so should the measurement system – adapting to new goals, maturing data quality, and expanding trust in the results. Ultimately, measurement is not about reporting performance – it is about enabling improvement and helping the value stream realize its full potential.

References

- [1] While this quote is often attributed to W. Edwards Deming and clearly reflects his philosophy, there is no validated source for it; the same applies to “In God we trust; all others must bring data.” Still, I like both, as they nicely capture the essence of measurement and learning.

- [2] More information about OKRs can be found in:

Doerr, John (2018). Measure What Matters: How Google, Bono, and the Gates Foundation Rock the World with OKRs. Penguin Press.

Castro, Felipe (2017). OKR Step by Step: How to Define and Implement Objectives and Key Results. Self-published eBook.

- [3] When a value stream is embedded within a larger value stream or portfolio, its OKRs should be aligned with the objectives of the higher level to ensure coherence and strategic consistency.

- [4] In many cases, the data and calculations were theoretically correct, yet users (those familiar with the process or involved in defining the measures) explained why the current implementation made little sense or produced misleading results. Often, people enter data with different intentions or no clear understanding of its purpose. In some cases, cumbersome tools or workflows lead users to provide incomplete or random inputs simply to progress through the submission or update process.

- [5] The topic of developing and operating a measurement system could easily fill another article, or become a focused part of a consulting engagement on its own.

Author: Peter Vollmer – Last Updated on January 5, 2026 by Peter Vollmer